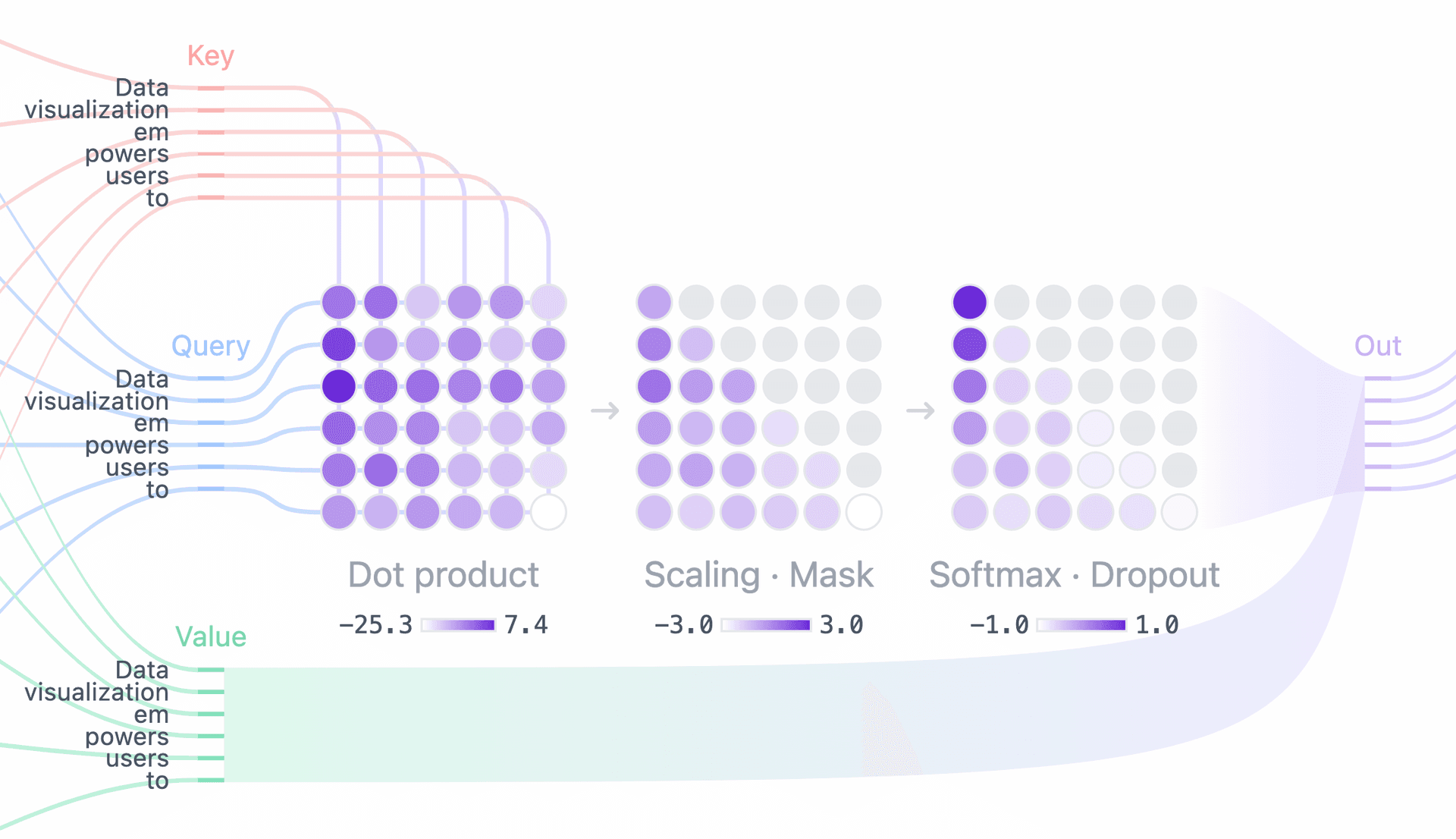

Visualize how transformer architecture generates an LLM's outputs

The transformer architecture is the neural network design that large language models use to predict and generate text from user input. This interactive lets you explore the "thinking" a GPT does, step by step, to turn your input into a response.
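To make that "thinking" a little more concrete, here is a minimal sketch of causal self-attention, the step in which each token gathers context from the tokens before it. It is a toy under stated assumptions, not how any particular GPT is implemented: the function names and the 2-dimensional embeddings are hypothetical, and the learned query, key, and value projections of a real transformer are left out.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(vectors):
    """Mix each token vector with the vectors at or before its position.

    Simplification (assumption): queries, keys, and values are the input
    vectors themselves; a real transformer applies learned projections first.
    """
    dim = len(vectors[0])
    outputs = []
    for i, query in enumerate(vectors):
        visible = vectors[: i + 1]  # causal mask: no peeking at later tokens
        # Scaled dot-product score between this token and each visible token.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in visible
        ]
        weights = softmax(scores)
        # Weighted average of the visible vectors, one dimension at a time.
        mixed = [
            sum(w * value[d] for w, value in zip(weights, visible))
            for d in range(dim)
        ]
        outputs.append(mixed)
    return outputs


# Hypothetical 2-dimensional embeddings for a three-token prompt.
toy_embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = causal_self_attention(toy_embeddings)
```

The first token can only attend to itself, so its output equals its input. Later tokens come out as blends of everything up to their position, which is the kind of context mixing a model relies on when it predicts the next token.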